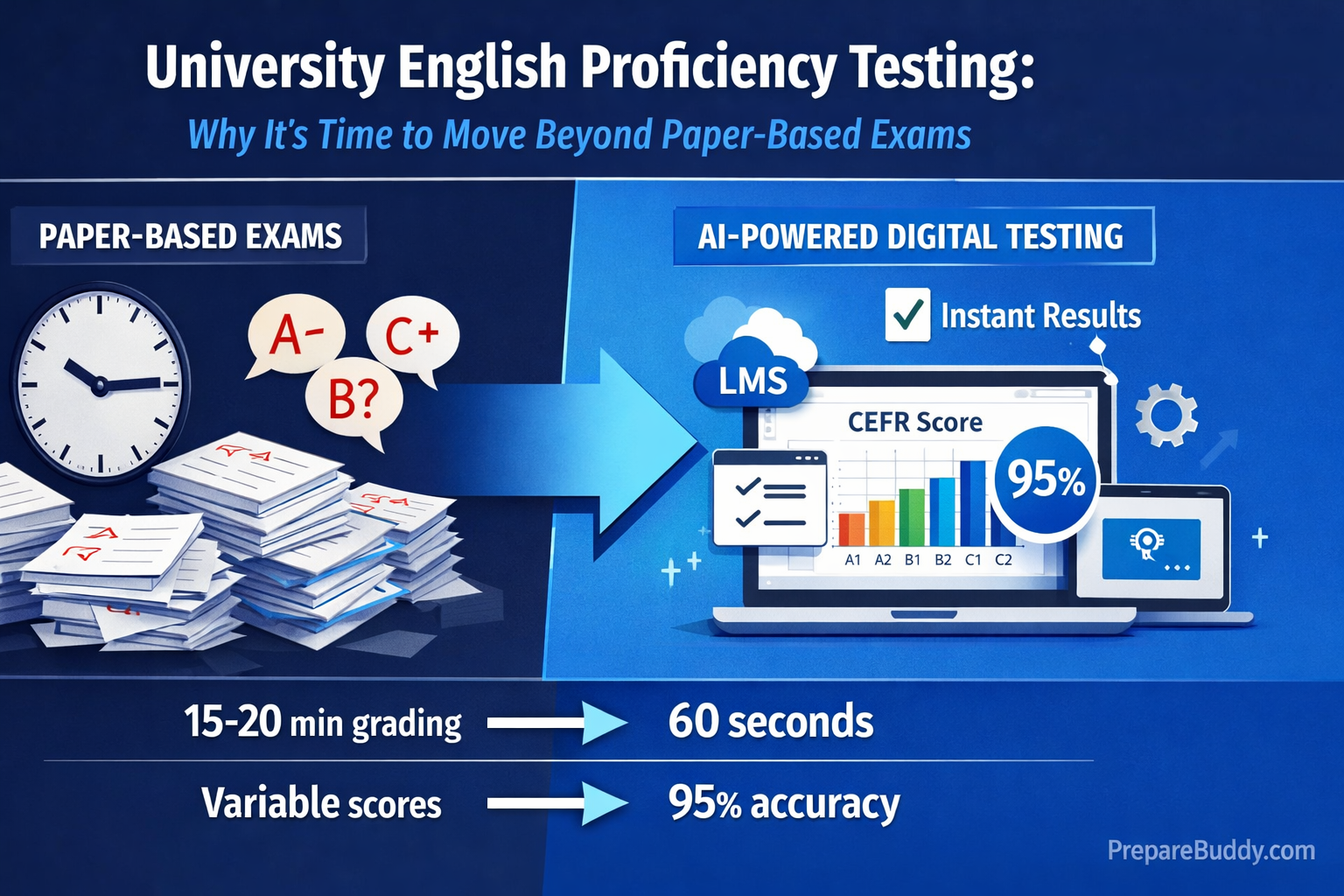

A single university department can process over 500 English proficiency assessments per semester. When each takes 15–20 minutes of faculty time to grade manually, that's roughly 125–167 hours of instructor capacity consumed by a task that AI can handle in under 60 seconds per submission, with scores that reach 95% alignment with human raters.

For university administrators weighing the switch from paper-based English exams, the question is no longer if digital testing is viable, but how quickly it can be deployed without disrupting existing academic workflows.

The Real Cost of Paper-Based English Proficiency Exams

Paper-based testing creates compounding inefficiencies that stretch far beyond grading time. Consider the full lifecycle of a single exam cycle at a mid-sized university:

| Task | Paper-Based | AI-Powered Digital |
|---|---|---|
| Exam creation & printing | 2–3 weeks | AI-generated in minutes |
| Exam administration | Room booking, invigilators, logistics | Online, anytime access |
| Grading per submission | 15–20 minutes | 30–60 seconds |
| Score consistency across graders | Variable (subjective) | 95% alignment with human raters |
| Results delivery | 1–3 weeks | Instant |
| Grade appeal audit trail | Manual, paper-based | Full digital evidence trail |
| LMS grade entry | Manual data entry | Automatic passback via LTI |
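To make the grading-time row concrete, here is a minimal Python sketch of the arithmetic for a 500-submission semester. The per-submission figures come from the table; the function name `grading_hours` is illustrative, and the AI figure is processing time rather than faculty time.

```python
def grading_hours(submissions: int, minutes_per_submission: float) -> float:
    """Total grading time in hours for a batch of submissions."""
    return submissions * minutes_per_submission / 60


SUBMISSIONS = 500  # per-semester volume quoted in this article

# Manual grading: 15-20 minutes per submission.
manual_low = grading_hours(SUBMISSIONS, 15)
manual_high = grading_hours(SUBMISSIONS, 20)

# AI grading: 30-60 seconds per submission, expressed in minutes.
ai_low = grading_hours(SUBMISSIONS, 0.5)
ai_high = grading_hours(SUBMISSIONS, 1.0)

print(f"Manual: {manual_low:.0f}-{manual_high:.0f} hours")  # Manual: 125-167 hours
print(f"AI: {ai_low:.1f}-{ai_high:.1f} hours")              # AI: 4.2-8.3 hours
```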

The hidden cost isn't just time—it's inconsistency. When 20+ instructors grade the same rubric, subjective interpretation creates disparities that lead to grade disputes, student complaints, and accreditation concerns.

What University English Proficiency Testing Looks Like in 2026

Modern institutional English testing has moved well beyond multiple-choice scanning. AI-powered platforms now assess all four language skills—reading, writing, listening, and speaking—using adaptive question types that adjust difficulty in real time based on student performance.

CEFR-Aligned Scoring Across 11 Languages

Rather than proprietary score scales that only work within one test system, leading platforms now map results to the Common European Framework of Reference (CEFR)—the international standard used by universities, immigration authorities, and employers worldwide.

| CEFR Level | Proficiency | Typical University Requirement |
|---|---|---|
| C2 | Mastery | Postgraduate research programs |
| C1 | Advanced | Most master's programs |
| B2 | Upper Intermediate | Undergraduate admission |
| B1 | Intermediate | Foundation/pathway programs |
| A2 | Elementary | Pre-sessional English courses |
| A1 | Beginner | Language course placement |
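Because CEFR levels are ordered, an admissions check against the table above reduces to a comparison. The sketch below is one way to express that in Python; the `CEFR` enum, the `TYPICAL_REQUIREMENT` mapping, and `meets_requirement` are illustrative names, and the thresholds simply restate the typical requirements in the table rather than any specific university's policy.

```python
from enum import IntEnum


class CEFR(IntEnum):
    """CEFR levels in ascending order of proficiency."""
    A1 = 1
    A2 = 2
    B1 = 3
    B2 = 4
    C1 = 5
    C2 = 6


# Typical minimum level per program type, taken from the table above.
TYPICAL_REQUIREMENT = {
    "Language course placement": CEFR.A1,
    "Pre-sessional English courses": CEFR.A2,
    "Foundation/pathway programs": CEFR.B1,
    "Undergraduate admission": CEFR.B2,
    "Most master's programs": CEFR.C1,
    "Postgraduate research programs": CEFR.C2,
}


def meets_requirement(level: CEFR, program: str) -> bool:
    """True if the student's level is at or above the program's typical minimum."""
    return level >= TYPICAL_REQUIREMENT[program]


print(meets_requirement(CEFR.C1, "Undergraduate admission"))  # True
print(meets_requirement(CEFR.B1, "Most master's programs"))   # False
```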

PrepareBuddy's Adaptive Language Proficiency module supports testing across 11 languages—including Chinese, Spanish, French, Hindi, Japanese, Korean, German, and Arabic—using 18 distinct question types across reading, writing, listening, and speaking. Every assessment is CEFR-calibrated, giving institutions a standardized proficiency framework regardless of the target language.

How AI-Powered Assessment Actually Works for Universities

The shift to AI-powered assessment isn't about replacing faculty judgment—it's about scaling consistent evaluation while freeing instructors for higher-value work like mentoring and curriculum development.

RAG-Enhanced Evaluation: Your Standards, Not Generic AI

Generic AI grading sounds generic, and students and faculty can tell. The most effective institutional AI assessment systems use Retrieval-Augmented Generation (RAG), a technique in which the AI retrieves your institution's own exemplary submissions as reference points before evaluating new work. Upload 50–100 graded examples, tag them by quality level, and the system can draw on them to calibrate what "High Distinction" looks like at your institution.

The result? Feedback that sounds like it came from your best graders, because it literally references your standards. Every evaluation includes specific citations from the student's submission mapped against rubric criteria—making grades defensible during appeals.
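The retrieval step can be sketched in a few lines of Python. This is a toy illustration, not PrepareBuddy's implementation: it ranks exemplars by word-overlap cosine similarity, where a production system would more likely use learned text embeddings, and the names `Exemplar`, `retrieve`, and `build_prompt` are hypothetical.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass


@dataclass
class Exemplar:
    text: str
    grade: str  # quality tag assigned by faculty, e.g. "High Distinction"


def _vector(text: str) -> Counter:
    """Bag-of-words term counts (a stand-in for a real embedding)."""
    return Counter(re.findall(r"[a-z']+", text.lower()))


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(submission: str, library: list[Exemplar], k: int = 2) -> list[Exemplar]:
    """Return the k graded exemplars most similar to the new submission."""
    query = _vector(submission)
    ranked = sorted(
        library, key=lambda ex: _cosine(query, _vector(ex.text)), reverse=True
    )
    return ranked[:k]


def build_prompt(submission: str, rubric: str, library: list[Exemplar], k: int = 2) -> str:
    """Assemble the evaluation prompt: rubric, retrieved references, then the new work."""
    parts = [f"Rubric:\n{rubric}", "Reference submissions graded by faculty:"]
    parts += [f"[{ex.grade}] {ex.text}" for ex in retrieve(submission, library, k)]
    parts.append(f"Submission to evaluate:\n{submission}")
    return "\n\n".join(parts)
```

The prompt returned by `build_prompt` would then be passed to whichever language model performs the evaluation, so the model sees institution-specific references alongside the rubric.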

Multi-Model Verification for High-Stakes Decisions

Single-model AI can be inconsistent. For critical assessments, multi-model verification runs an independent second evaluation pass. When scores from the primary and verification rounds diverge beyond a threshold, the submission is flagged for human review. This approach achieves 95% alignment with human grader standards—compared to roughly 85% for single-model systems.
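The flagging rule described above can be sketched as follows. The 10-point threshold and the choice to average the two agreeing scores are assumptions made for illustration; the article does not specify either, and `Verdict` and `verify` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Verdict:
    final_score: Optional[float]  # None when the submission goes to a human
    needs_human_review: bool


def verify(primary: float, secondary: float, threshold: float = 10.0) -> Verdict:
    """Compare two independent evaluation passes on a 0-100 scale.

    If the scores diverge by more than `threshold` points (an assumed value),
    no automatic score is issued and the submission is flagged for human review.
    Otherwise the two scores are averaged (also an assumption).
    """
    if abs(primary - secondary) > threshold:
        return Verdict(final_score=None, needs_human_review=True)
    return Verdict(final_score=(primary + secondary) / 2, needs_human_review=False)


print(verify(80, 84))  # Verdict(final_score=82.0, needs_human_review=False)
print(verify(60, 85))  # Verdict(final_score=None, needs_human_review=True)
```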

Quick Placement Without the Full Exam

Not every assessment needs to be a 60-minute formal exam. Diagnostic placement tests provide a 10–20 minute proficiency snapshot covering all four skills, generating a CEFR level that's accurate enough for course placement and pathway decisions.

This is particularly valuable at the start of each semester, when hundreds of international students need placement simultaneously. Instead of blocking faculty time for a full week of placement testing, a diagnostic assessment can run online, at scale, with instant CEFR results.

Speaking Assessment: The Hardest Skill to Test at Scale

Speaking has always been the bottleneck in language proficiency testing. It requires one-on-one examiner time, scheduling coordination, and subjective scoring. AI voice technology has changed this fundamentally.

PrepareBuddy's Voice AI enables live conversational speaking assessment with:

- Real-time pronunciation scoring across 30+ English accents

- 48-emotion detection that captures confidence, anxiety, and engagement patterns

- CEFR-aligned evaluation of fluency, vocabulary range, grammar accuracy, and comprehension

- Multilingual conversation practice in all 11 supported languages

Students converse naturally with an AI that adapts its complexity to match their proficiency level—no rigid exam scripts, no scheduling conflicts, no examiner fatigue.

LMS Integration: Grades Flow Automatically

Technology adoption fails when it creates more work, not less. That's why LTI 1.3 integration with existing Learning Management Systems is non-negotiable for university deployment.

| LMS Platform | Integration Features |
|---|---|
| Canvas | SSO, automatic grade passback, deep linking, assignment selection |
| Moodle | SSO, automatic grade passback, assignment linking |
| Blackboard | SSO, automatic grade passback, assignment linking |
| D2L Brightspace | SSO, automatic grade passback, assignment linking |
| Schoology | SSO, automatic grade passback, assignment linking |

Students authenticate through their existing university credentials. Grades sync directly to the LMS gradebook with automatic retry on failure. No manual data entry, no CSV exports, no grade transcription errors.
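As a rough sketch of what grade passback with retry involves: the payload below uses the score fields defined by the LTI Assignment and Grade Services specification, while the retry count, the exponential backoff delays, and the injected `send` callable are assumptions for illustration. A real integration would POST the payload to the line item's scores endpoint with an OAuth 2.0 access token, which is omitted here.

```python
import time
from datetime import datetime, timezone
from typing import Callable


def build_score(user_id: str, score: float, maximum: float) -> dict:
    """Build a score payload using LTI Assignment and Grade Services field names."""
    return {
        "userId": user_id,
        "scoreGiven": score,
        "scoreMaximum": maximum,
        "activityProgress": "Completed",
        "gradingProgress": "FullyGraded",
        "timestamp": datetime.now(timezone.utc).isoformat(),
    }


def send_with_retry(
    send: Callable[[dict], bool],   # hypothetical transport; returns True on success
    payload: dict,
    attempts: int = 3,              # assumed retry budget
    base_delay: float = 1.0,        # assumed initial backoff in seconds
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Try to deliver the score, doubling the wait after each failed attempt."""
    for attempt in range(attempts):
        if send(payload):
            return True
        if attempt < attempts - 1:
            sleep(base_delay * 2 ** attempt)
    return False
```

Passing the transport in as a callable keeps the retry logic independent of any particular HTTP client and makes it straightforward to test with a stub.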

Academic Integrity Built In

Digital testing raises valid concerns about cheating. Effective platforms address this with layered integrity measures—tab switch detection, copy-paste monitoring, session fingerprinting, answer timing analysis, and statistical pattern detection. Every incident is logged with forensic evidence, giving administrators a clear record for academic integrity proceedings.
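Of these measures, answer timing analysis is the simplest to illustrate. The sketch below flags students who answered a question far faster than the cohort's median time. The 25% cutoff and the function name `flag_fast_answers` are assumptions; a flag of this kind is a prompt for review, not proof of misconduct.

```python
from statistics import median


def flag_fast_answers(times_by_student: dict[str, float], ratio: float = 0.25) -> list[str]:
    """Return students whose answer time is below `ratio` of the cohort median.

    `times_by_student` maps a student ID to seconds spent on one question.
    The 0.25 ratio is an illustrative cutoff, not a published standard.
    """
    typical = median(times_by_student.values())
    return sorted(s for s, t in times_by_student.items() if t < ratio * typical)


times = {"a": 60, "b": 55, "c": 70, "d": 5}
print(flag_fast_answers(times))  # ['d']
```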

Batch Processing for End-of-Semester Crunch

The real test of any assessment platform is end-of-semester volume. PrepareBuddy's parallel processing architecture handles large batches efficiently:

| Batch Size | Processing Time |
|---|---|
| 1–20 submissions | ~2 minutes |
| 21–100 submissions | ~8 minutes |
| 100+ submissions | ~20 minutes |

Each evaluation includes per-criterion scores, evidence-based citations, strengths, areas for improvement, and actionable recommendations—delivered to students automatically via customizable email templates or through the LMS.
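Processing time grows more slowly than batch size because submissions are evaluated in parallel. A minimal sketch of that pattern in Python, assuming each evaluation is an independent, I/O-bound call: `evaluate` here is a placeholder that only counts words, and the worker count is arbitrary.

```python
from concurrent.futures import ThreadPoolExecutor


def evaluate(submission: str) -> dict:
    """Placeholder for a real evaluation call (e.g. a request to a scoring service)."""
    return {"submission": submission, "word_count": len(submission.split())}


def evaluate_batch(submissions: list[str], max_workers: int = 8) -> list[dict]:
    """Evaluate submissions concurrently; results come back in input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(evaluate, submissions))


results = evaluate_batch(["one two", "three"])
print([r["word_count"] for r in results])  # [2, 1]
```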

How to Get Started

Transitioning from paper-based to AI-powered English proficiency testing doesn't require a multi-year IT project. PrepareBuddy deploys in 24–48 hours with full white-label branding—your university's domain, logo, colors, and branded communications. Zero PrepareBuddy branding visible to students or faculty.

A typical implementation follows this path:

- Discovery: Requirements gathering, LMS audit, rubric review

- Configuration: LTI setup, rubric migration, reference library building

- Pilot: Single course or department trial with faculty training

- Rollout: Phased expansion across departments

With no lock-in contracts, a free first month, and no credit card required, the barrier to running a pilot is essentially zero.

The Bottom Line for University Decision-Makers

Universities that have adopted AI-powered assessment report a 75% reduction in grading time, 95% alignment between AI and human scores through multi-model verification, and a complete evidence trail that makes grades defensible when they are disputed. Faculty capacity freed from routine grading gets redirected to student mentoring, research, and curriculum innovation.

The institutions still running paper-based English proficiency exams aren't saving money—they're spending more per assessment while delivering slower, less consistent results.

Ready to explore AI-powered English proficiency testing for your university? Schedule a demo to see how 200+ institutions have already made the switch, or explore the university solutions page for a detailed feature overview.
