A multinational company hires 200 employees across five countries. Half claim "fluent English" on their resumes. Three months later, client-facing teams are missing deadlines because of miscommunication, internal documentation is riddled with errors, and the L&D department has no objective way to measure who actually needs language training — or whether the training is working.

This scenario plays out in organizations worldwide. According to industry research, language barriers cost businesses an estimated 2-3% of annual revenue through miscommunication, rework, and lost opportunities. Yet most companies still rely on self-reported proficiency or outdated paper-based placement tests that tell managers almost nothing actionable.

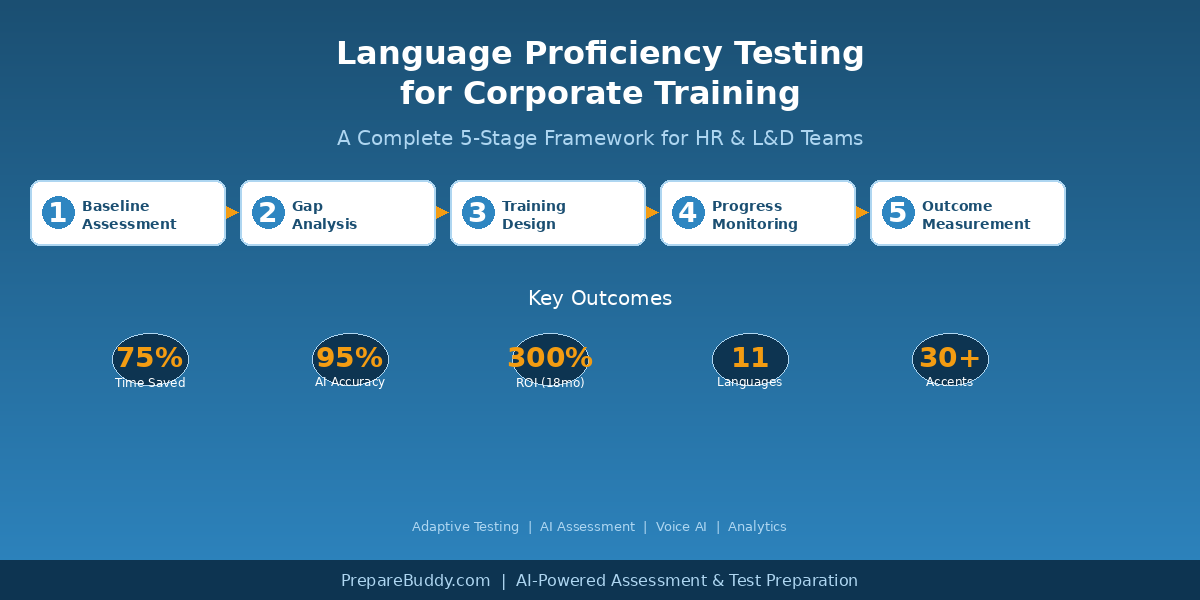

This guide provides a complete framework for implementing language proficiency testing in corporate training programs — from choosing the right assessment approach to measuring ROI.

Why Corporate Language Testing Matters in 2026

The shift to global remote teams has made language proficiency more critical than ever. When your engineering team in Vietnam collaborates with product managers in Germany and clients in Australia, "good enough" English is no longer good enough. Organizations need precise, measurable language data to make informed decisions about hiring, training, and team composition.

Three forces are driving the adoption of structured corporate language testing:

1. Remote and hybrid work amplifies communication gaps. In-person interactions allow for body language, whiteboard explanations, and real-time clarification. Remote communication — emails, Slack messages, video calls — depends almost entirely on language proficiency. A small gap in writing or speaking ability becomes a significant productivity drain when it affects every interaction.

2. Regulatory and client requirements are tightening. Industries like healthcare, aviation, maritime, and financial services increasingly mandate specific language proficiency levels for compliance. Companies serving international clients face contractual requirements around communication standards.

3. Training budgets demand accountability. L&D teams can no longer justify language training spend without measurable outcomes. Executives want to see pre-training baselines, post-training improvements, and clear connections between language proficiency and business metrics.

The Corporate Language Testing Framework: 5 Stages

| Stage | Purpose | Key Activities | Timeline |
|---|---|---|---|
| 1. Baseline Assessment | Measure current proficiency across the organization | Deploy adaptive language tests to all employees in target roles | Week 1-2 |
| 2. Gap Analysis | Identify where proficiency falls below role requirements | Map CEFR levels to job functions, flag critical gaps | Week 2-3 |
| 3. Training Design | Build targeted interventions based on data | Group employees by level, assign personalized learning paths | Week 3-4 |
| 4. Progress Monitoring | Track improvement during training | Periodic reassessment, AI-scored practice, analytics review | Ongoing |
| 5. Outcome Measurement | Quantify ROI and inform future training cycles | Compare pre/post scores, correlate with business KPIs | Quarterly |

Stage 1: Baseline Assessment — Getting Accurate Data

The foundation of any corporate language program is accurate baseline data. Self-assessment is unreliable — research consistently shows employees overestimate their proficiency by 1-2 CEFR levels. You need an objective, standardized assessment that measures all four language skills: reading, writing, listening, and speaking.

What to look for in a corporate language assessment tool:

Adaptive testing technology adjusts question difficulty in real time based on employee responses. This means a C1-level employee does not waste time on A2 questions, and a B1-level employee is not frustrated by C2-level content. Adaptive tests are faster (typically 30-45 minutes vs. 2+ hours for static tests) and more accurate because they zero in on each person's actual level. PrepareBuddy's adaptive language testing system uses this approach, supporting 11 languages with CEFR-aligned assessments from A1 to C2.
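To make the idea concrete, here is a minimal Python sketch of a staircase-style adaptive loop. It is an illustration only, not PrepareBuddy's algorithm, which this article does not describe; production systems usually rely on more sophisticated statistical models. The function name, the `answer_item` callback, and the "one level up after a correct answer, one level down after a miss" rule are all assumptions made for the example.

```python
CEFR_LEVELS = ["A1", "A2", "B1", "B2", "C1", "C2"]

def run_adaptive_test(answer_item, start_level="B1", num_items=12):
    """Staircase sketch: step up after a correct answer, down after a miss.

    answer_item(level) is a hypothetical callback that presents one item
    at the given CEFR level and returns True if it was answered correctly.
    """
    idx = CEFR_LEVELS.index(start_level)
    visited = []
    for _ in range(num_items):
        visited.append(idx)
        correct = answer_item(CEFR_LEVELS[idx])
        step = 1 if correct else -1
        # Clamp so the test never leaves the A1..C2 range.
        idx = min(max(idx + step, 0), len(CEFR_LEVELS) - 1)
    # Estimate the level from the second half of the test, once the
    # staircase has settled. The lower median is used so that a test
    # taker who alternates between two levels is placed at the lower one.
    tail = sorted(visited[len(visited) // 2:])
    return CEFR_LEVELS[tail[(len(tail) - 1) // 2]]
```

Because the difficulty follows the test taker, most items land near their actual level, which is why an adaptive test can be shorter than a static one that covers every level.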

AI-powered scoring eliminates the bottleneck of human raters for writing and speaking sections. With 95% scoring accuracy through multi-model verification, AI assessment delivers consistent, unbiased results at scale — whether you are testing 50 employees or 5,000. This is particularly important for organizations that need to assess large cohorts quickly during onboarding or annual reviews.

Speaking assessment with Voice AI evaluates pronunciation, fluency, vocabulary range, and grammatical accuracy in real time. PrepareBuddy's Voice AI technology supports 30+ English accents and uses 48-emotion detection to assess not just what employees say but how confidently and naturally they communicate, a critical factor in client-facing roles.

Stage 2: Gap Analysis — Mapping Proficiency to Role Requirements

Raw CEFR scores become actionable when mapped to specific job functions. Not every role requires the same proficiency level. A software developer writing technical documentation needs strong B2-level writing skills, while a sales executive presenting to international clients needs C1-level speaking fluency.

| Role Category | Minimum CEFR Level | Critical Skills | Priority |
|---|---|---|---|
| C-Suite / Senior Leadership | C1 | Speaking, Writing | High |
| Sales / Business Development | C1 | Speaking, Listening | High |
| Client Support / Account Management | B2+ | Speaking, Writing, Listening | High |
| Project Management | B2 | Writing, Speaking | Medium |
| Engineering / Technical | B2 | Reading, Writing | Medium |
| Operations / Back-Office | B1 | Reading, Writing | Lower |

The gap analysis reveals which employees need training, what specific skills to focus on, and how to prioritize training spend for maximum business impact.
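If your assessment platform exports per-skill CEFR levels, a gap analysis along these lines can be sketched in a few lines of Python. The role minimums and critical skills come from the table above; the dictionary keys, the `find_gaps` function, and the numeric scale (including the choice to place B2+ halfway between B2 and C1) are assumptions made for illustration.

```python
from dataclasses import dataclass

# Numeric scale for comparing levels. Placing "B2+" halfway between
# B2 and C1 is an assumption made for this sketch.
CEFR_SCORE = {"A1": 1.0, "A2": 2.0, "B1": 3.0, "B2": 4.0,
              "B2+": 4.5, "C1": 5.0, "C2": 6.0}

# Minimum level and critical skills per role, taken from the table above.
# The dictionary keys are hypothetical identifiers.
ROLE_REQUIREMENTS = {
    "senior_leadership": ("C1", ["speaking", "writing"]),
    "sales": ("C1", ["speaking", "listening"]),
    "client_support": ("B2+", ["speaking", "writing", "listening"]),
    "project_management": ("B2", ["writing", "speaking"]),
    "engineering": ("B2", ["reading", "writing"]),
    "operations": ("B1", ["reading", "writing"]),
}

@dataclass
class SkillGap:
    employee: str
    skill: str
    actual: str
    required: str
    gap: float  # size of the shortfall on the numeric scale

def find_gaps(employee, role, skill_levels):
    """Return the critical skills where the employee is below the role
    minimum, largest shortfall first."""
    required, critical_skills = ROLE_REQUIREMENTS[role]
    gaps = []
    for skill in critical_skills:
        actual = skill_levels[skill]
        gap = CEFR_SCORE[required] - CEFR_SCORE[actual]
        if gap > 0:
            gaps.append(SkillGap(employee, skill, actual, required, gap))
    return sorted(gaps, key=lambda g: g.gap, reverse=True)

# Example: a sales employee who listens at C1 but speaks at B2.
levels = {"reading": "C1", "writing": "B1", "listening": "C1", "speaking": "B2"}
for g in find_gaps("Employee 17", "sales", levels):
    print(f"{g.employee}: {g.skill} is {g.actual}, role requires {g.required}")
```

Note that the B1 writing score is not flagged here, because writing is not listed as a critical skill for sales. Restricting the check to critical skills is what keeps training spend focused on gaps that affect the role.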

Stage 3: Training Design — Personalized Learning at Scale

Generic language courses waste time and money. An employee struggling with pronunciation does not need grammar drills. A strong speaker who cannot write professional emails needs targeted writing practice, not conversation classes.

Modern corporate language training should be personalized based on assessment data. AI-generated study plans adapt to each employee's strengths and weaknesses, creating individualized learning paths that focus on the specific skills their role demands.

Key elements of effective corporate language training design include skill-specific modules that target the exact gaps identified in the assessment, regular practice with instant AI feedback so employees can improve between formal training sessions, and progress benchmarks tied to business-relevant scenarios rather than abstract grammar rules.
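One simple way to turn assessment data into training groups is to cohort employees by their weakest skill and its level. The Python sketch below assumes results arrive as a mapping of employee to per-skill CEFR levels; the `build_cohorts` function and the data shape are hypothetical, and a real program would likely also weigh role requirements and scheduling.

```python
from collections import defaultdict

CEFR_ORDER = ["A1", "A2", "B1", "B2", "C1", "C2"]

def build_cohorts(results):
    """Group employees by (weakest skill, level of that skill).

    results: {employee: {skill: CEFR level}}
    If two skills tie for weakest, the one listed first wins.
    """
    cohorts = defaultdict(list)
    for employee, skills in results.items():
        weakest = min(skills, key=lambda s: CEFR_ORDER.index(skills[s]))
        cohorts[(weakest, skills[weakest])].append(employee)
    return dict(cohorts)

results = {
    "E1": {"reading": "B2", "writing": "B1", "listening": "B2", "speaking": "B2"},
    "E2": {"reading": "C1", "writing": "B1", "listening": "B2", "speaking": "B2"},
    "E3": {"reading": "B2", "writing": "B2", "listening": "B2", "speaking": "A2"},
}
for (skill, level), members in build_cohorts(results).items():
    print(f"{skill} at {level}: {members}")
```

Here E1 and E2 would share a B1 writing module, while E3 would be routed to speaking practice at A2 instead of sitting through writing drills they do not need.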

Stage 4: Progress Monitoring — Measuring What Matters

Periodic reassessment is essential. Monthly or quarterly adaptive tests track whether employees are improving, plateauing, or regressing. Analytics dashboards give L&D managers real-time visibility into team-wide progress, individual performance trends, and areas requiring additional intervention.

Effective progress monitoring answers three questions: Are employees improving? Are they improving in the right skills? Is the rate of improvement sufficient to meet business goals?
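The third question, whether the rate of improvement is sufficient, lends itself to a quick projection. The Python sketch below assumes your platform reports a numeric score per reassessment (a hypothetical 0-100 scale here) and that improvement is roughly linear, which is a simplification; the function names are illustrative.

```python
def trend(scores):
    """Classify a chronological list of scores."""
    if len(scores) < 2:
        raise ValueError("need at least two assessments")
    change = scores[-1] - scores[0]
    if change > 0:
        return "improving"
    return "plateauing" if change == 0 else "regressing"

def months_to_target(scores, target, months_between_tests=3):
    """Project how many more months are needed to reach the target score.

    Assumes linear improvement at the average rate observed so far.
    Returns 0.0 if the target is already met, and None if the employee
    is not improving, since no projection is possible in that case.
    """
    if scores[-1] >= target:
        return 0.0
    if trend(scores) != "improving":
        return None
    elapsed_months = (len(scores) - 1) * months_between_tests
    rate_per_month = (scores[-1] - scores[0]) / elapsed_months
    return (target - scores[-1]) / rate_per_month

# Three quarterly assessments, with a role target of 76.
print(trend([52, 58, 64]))                 # improving
print(months_to_target([52, 58, 64], 76))  # 6.0
```

If the projected time exceeds the deadline your business goal implies, that is a signal to intensify or redesign the training instead of waiting for the next quarterly review.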

Stage 5: Measuring ROI — Connecting Language to Business Outcomes

The final stage connects language proficiency improvements to business metrics. Organizations using AI-powered assessment platforms report significant returns:

| Metric | Typical Impact | How to Measure |
|---|---|---|
| Assessment time saved | 75% reduction vs. manual testing | Compare hours spent on testing before/after |
| Grading consistency | 95% AI scoring accuracy | Benchmark AI scores against expert raters |
| Training ROI | Up to 300% within 18 months | Cost of training vs. productivity improvements |
| Employee satisfaction | 95% satisfaction rate | Post-assessment surveys and feedback |
| Manager time saved | 18+ hours/week on grading | Track time allocation before/after automation |
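Two of the measurements in this table reduce to simple arithmetic, sketched below in Python. The ROI formula is the standard (gain minus cost) divided by cost. For grading consistency, the sketch assumes "accuracy" means the share of responses where the AI and an expert rater assign the same CEFR level; the table does not define the term, so treat that as one possible interpretation. All figures in the example are made up.

```python
def training_roi_percent(training_cost, productivity_gain):
    """Standard ROI: net gain as a percentage of what was spent."""
    if training_cost <= 0:
        raise ValueError("training_cost must be positive")
    return (productivity_gain - training_cost) / training_cost * 100

def rater_agreement(ai_levels, expert_levels):
    """Share of responses where AI and expert assign the same level.

    Exact-match agreement is an assumption of this sketch; other
    definitions (for example, agreement within one level) are possible.
    """
    if not ai_levels or len(ai_levels) != len(expert_levels):
        raise ValueError("need two non-empty lists of equal length")
    matches = sum(a == e for a, e in zip(ai_levels, expert_levels))
    return matches / len(ai_levels)

# Illustrative numbers only.
print(training_roi_percent(50_000, 200_000))          # 300.0
print(rater_agreement(["B1", "B2", "C1", "B2"],
                      ["B1", "B2", "B2", "B2"]))      # 0.75
```

The hard part of the ROI calculation is not the formula but the input: you need a defensible estimate of the productivity gain, which is why pre-training baselines and business KPIs should be recorded before training begins.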

Choosing the Right Platform: What HR and L&D Teams Should Evaluate

When selecting a corporate language testing platform, prioritize these capabilities:

Multi-skill assessment: The platform should test reading, writing, listening, and speaking — not just grammar or vocabulary in isolation. Real-world communication requires all four skills working together.

Scalability: Can the platform handle batch assessments of hundreds or thousands of employees simultaneously? Enterprise-grade platforms are designed for large-scale deployment with 24-48 hour setup times.

White-label capability: Many organizations want assessments branded to their company, not a third-party provider. White-label platforms offer 100% custom branding — your domain, logo, colors, and branded communications — so employees experience a seamless, professional testing environment.

CEFR alignment: Ensure the assessment maps to the Common European Framework of Reference (CEFR), the international standard for language proficiency. This provides a universally understood benchmark that HR teams, managers, and employees can all interpret consistently.

Analytics and reporting: L&D managers need more than individual scores. They need team-level dashboards, trend analysis, gap visualization, and exportable reports for executive stakeholders.

Getting Started: A 30-Day Implementation Plan

Week 1: Define role-specific proficiency requirements. Map each job function to minimum CEFR levels for reading, writing, listening, and speaking.

Week 2: Deploy baseline assessments. Use adaptive testing to assess all employees in target roles. With AI-powered platforms, this can be done remotely — employees complete assessments on their own schedule.

Week 3: Analyze results and design training. Review the gap analysis, group employees by proficiency level and training needs, and create personalized learning paths.

Week 4: Launch training and establish monitoring cadence. Set up quarterly reassessments and configure analytics dashboards for ongoing tracking.

Take the First Step

Language proficiency testing does not have to be complicated or expensive. Modern AI-powered platforms make it possible to assess your entire organization in days, not months — with accuracy that matches expert human raters at a fraction of the cost.

Whether you are building a corporate language program from scratch or upgrading from outdated testing methods, the key is starting with accurate baseline data and building a measurement framework that connects language skills to business outcomes.

Schedule a demo to see how adaptive language testing and AI-powered assessment can transform your corporate training program, or explore pricing to find the right plan for your organization.
