A coaching center in Hyderabad once graded 400 IELTS mock tests in a weekend. By Monday, the top three scorers had identical essay structures — and two of them sat different exams, in different batches, on different days. Without forensic evidence of what happened during those exams, the center had no way to prove anything. They quietly dropped those scores from their reports and moved on.

This is the quiet crisis of online assessment in 2026. Institutions have scaled up remote and hybrid mock tests, but most platforms still rely on tab-switch monitoring alone — the equivalent of watching a classroom through a keyhole. Serious online exam cheating detection needs more than that. It needs a forensic evidence trail, real-time prevention, and the kind of depth that holds up when a student appeals a result or a university partner audits your process.

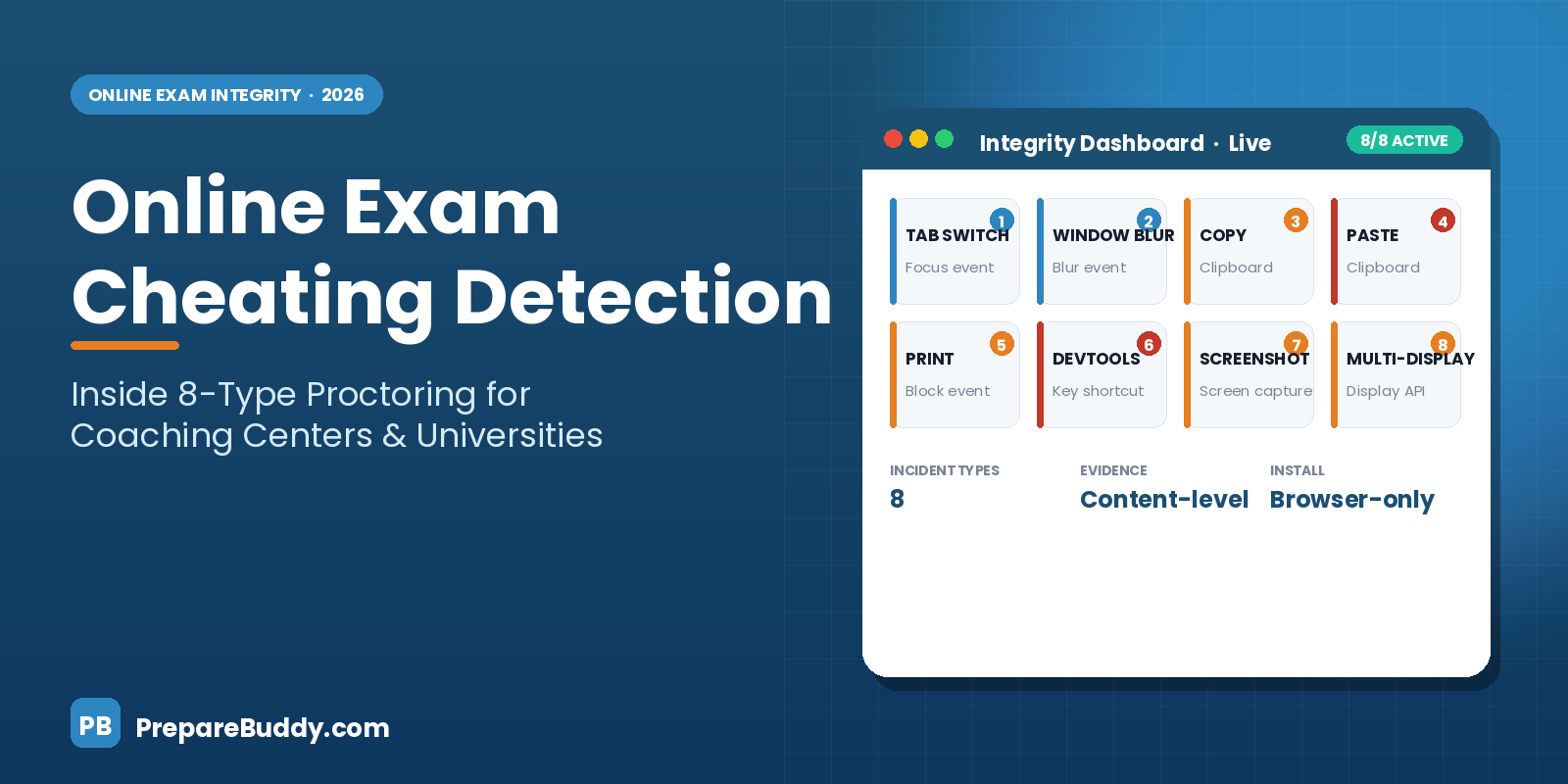

This guide breaks down how modern proctoring works, what 8-type cheating detection actually catches, and how coaching centers and universities can run high-integrity online exams without installing extra software on student devices.

Why Online Exam Cheating Detection Matters More in 2026

The assessment workload has shifted. Coaching centers routinely run online mock batches for IELTS, PTE, TOEFL, and Duolingo. Universities deliver quizzes and language proficiency checks remotely. Consultants need credible score reports to recommend students to university partners. When a score is questioned, "we trust them" isn't a defensible answer — the institution needs data.

At the same time, generative AI has made casual cheating trivial. A student with a second monitor, an open browser on another device, or a quick clipboard paste can meaningfully inflate a score. Most legacy proctoring tools detect one or two of these behaviors. They miss the rest, and they rarely produce evidence a reviewer can actually use.

The bar has moved. Online exam cheating detection in 2026 needs to answer three questions at once: What happened? When did it happen? What is the evidence?

The 8 Types of Cheating Detection That Actually Matter

Not all incident types are equal. Some are low-signal (a brief tab switch while a tired test-taker checks the time). Others are high-signal (a clipboard paste of 200 words into an essay box). A complete proctoring system should detect all eight and log evidence for each.

Here is the breakdown of what PrepareBuddy's integrity engine catches, how it detects each behavior, and the evidence it collects. This is the full 8-type cheating detection matrix that powers mock tests on our white-label platform.

| Incident Type | Detection Method | Evidence Collected | Risk Level |
|---|---|---|---|
| Tab Switch | Focus event monitoring | Timestamp, duration | Medium |
| Window Blur | Blur event monitoring | Element clicked | Medium |
| Copy Attempt | Clipboard monitoring | Content attempted | High |
| Paste Attempt | Clipboard monitoring | Content pasted | Critical |
| Print Attempt | Print event blocking | Timestamp | High |
| DevTools Open | Keyboard shortcut detection | Key combination | Critical |
| Screenshot | Screen capture detection | Timestamp | High |
| Multiple Monitor | Display API | Screen count | High |

The important thing here is not the list but the Evidence Collected column. A reviewer doesn't just see "paste attempt flagged." They see the exact content pasted, the timestamp, and the field where it landed. That evidence trail is what makes appeals resolvable and audits survivable.
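To make the evidence column concrete, here is a minimal sketch of what a single logged incident could look like as a data structure. The type names, field names, and the `RISK_BY_TYPE` and `makeIncident` helpers are hypothetical illustrations derived from the matrix above, not PrepareBuddy's actual schema.

```typescript
// Hypothetical incident schema, derived from the 8-type matrix above.
type IncidentType =
  | "tab_switch"
  | "window_blur"
  | "copy_attempt"
  | "paste_attempt"
  | "print_attempt"
  | "devtools_open"
  | "screenshot"
  | "multiple_monitor";

type RiskLevel = "medium" | "high" | "critical";

// Risk levels copied from the table's Risk Level column.
const RISK_BY_TYPE: Record<IncidentType, RiskLevel> = {
  tab_switch: "medium",
  window_blur: "medium",
  copy_attempt: "high",
  paste_attempt: "critical",
  print_attempt: "high",
  devtools_open: "critical",
  screenshot: "high",
  multiple_monitor: "high",
};

interface Incident {
  sessionId: string;  // exam session the incident belongs to
  type: IncidentType;
  questionId: string; // question on screen when the event fired
  timestamp: number;  // milliseconds since the Unix epoch
  // Type-specific evidence, e.g. pasted text, key combination, screen count.
  evidence: Record<string, string | number>;
}

// Builds an incident record and attaches its risk level from the matrix.
function makeIncident(
  sessionId: string,
  type: IncidentType,
  questionId: string,
  evidence: Record<string, string | number>,
  timestamp: number = Date.now(),
): Incident & { risk: RiskLevel } {
  return { sessionId, type, questionId, timestamp, evidence, risk: RISK_BY_TYPE[type] };
}

// Example: a paste into an essay field, with the pasted content kept as evidence.
const pasted = makeIncident("session-42", "paste_attempt", "writing-task-2", {
  field: "essay",
  content: "Some pasted paragraph...",
});
console.log(pasted.risk); // → critical
```

Keeping evidence as a per-type key/value map lets each incident carry exactly what the table lists for it (content for clipboard events, a key combination for DevTools, a screen count for displays) without a column for every possibility.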

How PrepareBuddy Compares to Traditional Proctoring Tools

Coaching centers and universities often ask: "We already use ProctorU or Examity for big exams. Why look at anything else for mocks and internal assessments?"

The honest answer: legacy proctoring tools were built for a different use case — high-stakes, low-volume certification exams where it's acceptable to install software on a student's machine and have a human proctor watch a webcam feed. That model breaks down for mock tests, practice exams, and institutional language proficiency checks where volume is high and friction needs to be low.

| Feature | PrepareBuddy | ProctorU | Examity |
|---|---|---|---|
| Covers All 8 Incident Types | Yes (all 8) | Partial | Partial |
| Forensic Evidence | Yes (content-level) | Yes (video) | Yes (video) |
| No Extra Software | Yes (browser-only) | No (install required) | No (install required) |
| Built-in Review Dashboard | Yes | Yes | Yes |
| Proctoring Cost | Included | Per-session fee | Per-session fee |
| White-Label Ready | Yes (full rebrand) | No | No |

For mock tests, diagnostics, and institutional assessments, the combination of browser-only delivery and proctoring included in the platform price is decisive. Students can take the exam in any modern browser, and the institute doesn't pay a per-session proctoring fee on top of platform costs.

What Actually Happens Inside a Proctored Online Exam

Here's the sequence of events from the moment a student clicks "Start Exam" to the moment the coordinator reviews the result:

Pre-exam checks. The browser verifies display count (to catch a pre-opened second monitor), confirms the browser is in focus, and loads the question paper in a secured exam mode. If an anomaly is detected before the exam starts, the student is asked to close extra displays or applications.
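The pre-exam step can be sketched as a small pure function. This is an illustration under stated assumptions: `preExamCheck`, `PreExamEnv`, and the messages are hypothetical names, and the inputs are passed in so the logic can be tested outside a browser. In a page, the display flag could come from `Screen.isExtended`, which is part of the Window Management API and is currently Chromium-only (other browsers leave it undefined), and the focus flag from the standard `document.hasFocus()`.

```typescript
// Hypothetical pre-exam check. In a page you might call it as:
//   preExamCheck({ isExtended: (screen as any).isExtended, hasFocus: document.hasFocus() })
interface PreExamEnv {
  isExtended?: boolean; // true if the device's output spans multiple screens; undefined if unsupported
  hasFocus: boolean;    // whether the exam page currently has focus
}

interface PreExamResult {
  ok: boolean;
  problems: string[]; // messages shown to the student before the exam starts
  displayCheck: "single" | "multiple" | "unknown";
}

function preExamCheck(env: PreExamEnv): PreExamResult {
  const problems: string[] = [];
  let displayCheck: PreExamResult["displayCheck"] = "unknown";

  if (env.isExtended === true) {
    displayCheck = "multiple";
    problems.push("Please disconnect or close any additional displays.");
  } else if (env.isExtended === false) {
    displayCheck = "single";
  }
  // If isExtended is undefined the browser can't tell us, so we report "unknown"
  // and leave the decision to the institution's policy.

  if (!env.hasFocus) {
    problems.push("Please close other applications and click inside the exam window.");
  }

  return { ok: problems.length === 0, problems, displayCheck };
}
```

Reporting "unknown" separately, instead of silently treating it as a pass, keeps the browser's limitation visible to the coordinator.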

Continuous event monitoring. Once the exam starts, PrepareBuddy's integrity engine listens for focus events, blur events, clipboard API calls, print events, keyboard shortcuts associated with developer tools, and screen-capture requests. Each event is stamped with a timestamp and attached to the specific question the student was viewing at the time.
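A minimal sketch of the monitoring loop follows, assuming a hypothetical `attachMonitor` helper that works against any `EventTarget` (in a page you would likely pass `window`). Two choices here are ours, not confirmed by the article: tab switches are detected with the Page Visibility API's `visibilitychange` event rather than focus events alone, and the sketch does not listen to every `keydown`, in keeping with the no-keystroke-logger stance described in the privacy section.

```typescript
// Hypothetical monitor: records browser-level exam events with a timestamp
// and the question on screen.
interface LoggedEvent {
  type: string;       // DOM event type, e.g. "blur", "paste"
  questionId: string; // question being viewed when the event fired
  timestamp: number;  // ms since epoch, from the injected clock
}

// "visibilitychange" covers tab switches, "blur" covers focus leaving the window,
// "beforeprint" covers print dialogs; copy/paste are clipboard events.
const MONITORED_EVENTS = ["visibilitychange", "blur", "copy", "paste", "beforeprint"];

function attachMonitor(
  target: EventTarget,
  currentQuestionId: () => string,
  log: LoggedEvent[],
  now: () => number = Date.now,
): () => void {
  const handler = (e: Event): void => {
    log.push({ type: e.type, questionId: currentQuestionId(), timestamp: now() });
  };
  for (const type of MONITORED_EVENTS) target.addEventListener(type, handler);
  // Return a cleanup function so listeners are removed when the exam ends.
  return () => {
    for (const type of MONITORED_EVENTS) target.removeEventListener(type, handler);
  };
}
```

Passing the question lookup and the clock in as functions is what lets each event be tied to "the specific question the student was viewing at the time" without the monitor knowing anything about the exam UI.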

Real-time prevention for high-risk and critical incidents. Copy, paste, print, and developer-tools events are blocked in real time, not just logged. A student who tries to paste an AI-generated answer sees the field reject the paste, along with a warning. The attempt is still recorded as evidence.
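The block-and-record pattern can be sketched with `preventDefault()`, which stops a cancelable clipboard or keyboard event from taking effect. `blockedShortcut`, `attachPrevention`, and the shortcut list are hypothetical; they key off the layout-independent `KeyboardEvent.code` values. Two limits apply to any sketch like this: browsers differ in which shortcuts a page may cancel, and `beforeprint` is not cancelable, so a print started from the browser menu can only be logged, not stopped.

```typescript
// Hypothetical prevention layer: cancels clipboard events and selected keyboard
// shortcuts, and records every attempt so it still appears as evidence.
interface KeyInfo {
  code: string;     // KeyboardEvent.code, e.g. "KeyI", "F12"
  ctrlKey: boolean;
  metaKey: boolean; // Cmd on macOS
  shiftKey: boolean;
  altKey: boolean;  // Option on macOS
}

interface BlockedAttempt {
  kind: string;     // "copy", "paste", "devtools" or "print"
  timestamp: number;
}

// Returns a label for shortcuts this sketch blocks, or null for anything else.
function blockedShortcut(k: KeyInfo): string | null {
  if (k.code === "F12") return "devtools";
  // Ctrl+Shift+I/J/C on Windows/Linux, Cmd+Option+I/J/C on macOS.
  const devtoolsChord = (k.ctrlKey && k.shiftKey) || (k.metaKey && k.altKey);
  if (devtoolsChord && ["KeyI", "KeyJ", "KeyC"].indexOf(k.code) !== -1) return "devtools";
  // Ctrl+P / Cmd+P opens the print dialog.
  if ((k.ctrlKey || k.metaKey) && k.code === "KeyP") return "print";
  return null;
}

// In a page you would likely pass `document` as the target.
function attachPrevention(
  target: EventTarget,
  attempts: BlockedAttempt[],
  now: () => number = Date.now,
): void {
  for (const type of ["copy", "paste"]) {
    target.addEventListener(type, (e: Event) => {
      e.preventDefault(); // the clipboard action never reaches the answer field
      attempts.push({ kind: type, timestamp: now() });
    });
  }
  target.addEventListener("keydown", (e: Event) => {
    // Browsers deliver a KeyboardEvent here; only the fields in KeyInfo are read.
    const kind = blockedShortcut(e as unknown as KeyInfo);
    if (kind !== null) {
      e.preventDefault();
      attempts.push({ kind, timestamp: now() });
    }
  });
}
```

Plain Ctrl+C is deliberately not treated as a shortcut here: it surfaces as a `copy` event, which is already cancelled and recorded by the clipboard listener.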

Post-exam review dashboard. After submission, coordinators see a timeline of every incident per student, the affected questions, the exact content involved, and a risk score. Instead of combing through video, reviewers see a one-page summary that surfaces the highest-risk attempts first.
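The "highest-risk first" summary can be sketched as grouping incidents per student, ordering each timeline chronologically, and sorting students by a weighted score. The `summarize` function and the 1/3/5 weights for Medium/High/Critical are illustrative assumptions, not PrepareBuddy's actual scoring model.

```typescript
// Hypothetical review-dashboard ordering with illustrative weights.
type Risk = "medium" | "high" | "critical";
const WEIGHT: Record<Risk, number> = { medium: 1, high: 3, critical: 5 };

interface ReviewIncident {
  studentId: string;
  questionId: string;
  risk: Risk;
  timestamp: number; // ms since epoch
}

interface StudentSummary {
  studentId: string;
  riskScore: number;          // sum of incident weights
  timeline: ReviewIncident[]; // that student's incidents, oldest first
}

function summarize(incidents: ReviewIncident[]): StudentSummary[] {
  // Group incidents per student.
  const byStudent = new Map<string, ReviewIncident[]>();
  for (const inc of incidents) {
    const list = byStudent.get(inc.studentId) ?? [];
    list.push(inc);
    byStudent.set(inc.studentId, list);
  }

  const summaries: StudentSummary[] = [];
  byStudent.forEach((list, studentId) => {
    const timeline = list.slice().sort((a, b) => a.timestamp - b.timestamp);
    const riskScore = timeline.reduce((sum, inc) => sum + WEIGHT[inc.risk], 0);
    summaries.push({ studentId, riskScore, timeline });
  });

  // Highest-risk students first, so reviewers start where it matters most.
  return summaries.sort((a, b) => b.riskScore - a.riskScore);
}
```

A simple additive score means many medium-risk tab switches can eventually outrank a single critical paste; an institution that wants the opposite could weight critical incidents much more heavily.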

The Business Case for Institutions

Online exam cheating detection is often framed as a "defense" investment. It's actually a growth investment, because score credibility drives three commercial outcomes institutions care about: student retention, partner trust, and enterprise contracts.

| Institution Type | What Proctoring Unlocks | Why It Matters |
|---|---|---|
| Coaching Centers | Defensible score reports across online and hybrid batches | Stops score disputes; protects teacher time |
| Education Consultants | Credible diagnostic scores for university recommendations | Builds trust with university partners |
| Universities | Remote English proficiency testing at scale | Replaces paper-based exam logistics |
| Language Institutes | High-integrity CEFR proficiency certification | Enables branded testing as a revenue line |

Institutions on our platform report 75% time saved on grading and 300% ROI within 18 months. A meaningful portion of that ROI comes from eliminating the manual overhead of chasing disputed scores and re-administering compromised exams.

The Privacy and Consent Question

Any honest conversation about online exam cheating detection has to address privacy. Students should know what's being monitored, and institutions should never collect more data than they need. PrepareBuddy's approach is deliberately narrow:

No camera feed is required. No microphone is recorded. No keystroke logger runs in the background. No personal files are accessed. The integrity engine only listens for browser-level events that relate directly to the exam — tab switches, clipboard actions, display changes, and print or developer-tool shortcuts. Every incident is tied to a specific exam session and deleted on the institution's schedule.
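The data-minimization and deletion rules can be sketched on the storage side. The `StoredIncident` shape, `applyRetention`, and `deleteSession` are hypothetical; the point is that a record holds only exam-scoped browser events (no video, audio, or keystrokes), and that both time-based retention and per-session deletion reduce to simple filters.

```typescript
// Hypothetical storage-side retention for proctoring incidents.
interface StoredIncident {
  sessionId: string;  // every incident belongs to one exam session
  type: string;       // e.g. "tab_switch", "paste_attempt"
  recordedAt: number; // ms since epoch
}

const DAY_MS = 24 * 60 * 60 * 1000;

// Keep only incidents still inside the institution's retention window.
function applyRetention(incidents: StoredIncident[], retentionDays: number, now: number): StoredIncident[] {
  const cutoff = now - retentionDays * DAY_MS;
  return incidents.filter((inc) => inc.recordedAt >= cutoff);
}

// Remove everything tied to one exam session, e.g. when an institution deletes it early.
function deleteSession(incidents: StoredIncident[], sessionId: string): StoredIncident[] {
  return incidents.filter((inc) => inc.sessionId !== sessionId);
}
```

Because every record carries its session ID, deleting "on the institution's schedule" never requires searching unrelated personal data.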

This narrower approach is one reason institutions can deploy proctored mocks without the legal-review bottlenecks that often slow down camera-based proctoring rollouts.

How to Roll Out Proctored Online Exams at Your Institute

If you're a coaching center, consultant, or university administrator planning to introduce proctored online assessments, the rollout on the PrepareBuddy platform is simpler than most teams expect: the platform deploys in 24-48 hours, fully white-labeled under your own brand.

Start with a single test type — IELTS or PTE mocks are good candidates because they already have clear section structures and existing score bands. Configure the integrity rules (which incidents auto-flag, which auto-block, which require coordinator review), run a calibration batch with your existing students, and review the evidence dashboard with your academic team before expanding to new test types.
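The integrity-rule step might look like this as configuration. Everything here is a hypothetical sketch: the action names, the per-type choices, the `decide` helper, and the tab-switch threshold are assumptions chosen to mirror the article (copy, paste, print, and DevTools blocked in real time; brief tab switches treated as low-signal), not the platform's real configuration format.

```typescript
// Hypothetical integrity-rule configuration for a first IELTS mock rollout.
type Action = "auto_block" | "auto_flag" | "coordinator_review";

type IncidentKind =
  | "tab_switch" | "window_blur" | "copy_attempt" | "paste_attempt"
  | "print_attempt" | "devtools_open" | "screenshot" | "multiple_monitor";

interface IntegrityRules {
  testType: string;
  actions: Record<IncidentKind, Action>;
  // Brief tab switches are low-signal, so only repeated ones are escalated.
  tabSwitchReviewThreshold: number;
}

const ieltsMockRules: IntegrityRules = {
  testType: "IELTS Academic mock",
  actions: {
    tab_switch: "coordinator_review",
    window_blur: "coordinator_review",
    copy_attempt: "auto_block",
    paste_attempt: "auto_block",
    print_attempt: "auto_block",
    devtools_open: "auto_block",
    screenshot: "auto_flag",
    multiple_monitor: "auto_flag",
  },
  tabSwitchReviewThreshold: 3,
};

// Decide what to do with the nth occurrence (1-based) of an incident in one session.
function decide(rules: IntegrityRules, kind: IncidentKind, occurrence: number): Action | "log_only" {
  if (kind === "tab_switch" && occurrence < rules.tabSwitchReviewThreshold) return "log_only";
  return rules.actions[kind];
}
```

Typing `actions` as a `Record` over all eight kinds means the compiler rejects a rule set that forgets an incident type, which is useful when cloning the config for a second test type.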

Within two batches, teachers will notice that essays stop being suspiciously similar and that score distributions start looking the way they should: bell-shaped, rather than bunched up against the top score band (left-skewed).
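One way to put a number on "bunched up against the top band" is the moment-based (Fisher-Pearson) skewness of a batch's scores, g1 = m3 / m2^(3/2), where m2 and m3 are the second and third central moments. A clearly negative value means a long tail toward low scores with most students near the ceiling. The `skewness` function below is a hypothetical helper, and a strong batch can also skew left legitimately, so a negative value is a prompt to open the evidence dashboard, not a verdict.

```typescript
// Moment-based (Fisher-Pearson) skewness: g1 = m3 / m2^(3/2), using population moments.
function skewness(scores: number[]): number {
  const n = scores.length;
  if (n < 3) throw new Error("need at least 3 scores");
  const mean = scores.reduce((s, x) => s + x, 0) / n;
  let m2 = 0;
  let m3 = 0;
  for (const x of scores) {
    const d = x - mean;
    m2 += d * d;
    m3 += d * d * d;
  }
  m2 /= n;
  m3 /= n;
  if (m2 === 0) return 0; // all scores identical: no spread, treat as symmetric
  return m3 / Math.pow(m2, 1.5);
}

// A batch where most band scores sit near the top gives a negative value.
const batch = [4, 8.5, 8.5, 9, 9];
console.log(skewness(batch) < 0); // → true
```

Comparing this figure across the calibration batch and later batches gives a simple, auditable signal that complements the per-student evidence.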

The Bottom Line

Online exam cheating detection in 2026 is not about catching students. It's about protecting the credibility of every score your institution issues. When a student goes to a university with a PTE 79 or an IELTS 7.5 from your mock batch, that score needs to mean something — to the student, to the university partner, and to every prospective student who hears about your pass rates.

Eight incident types, content-level evidence, browser-only deployment, and a forensic review dashboard give institutions what they actually need: defensible scores, low friction, and a clear audit trail.

Ready to see proctored mock tests in action? Schedule a demo to walk through the 8-type detection engine with your academic team, or explore the white-label platform for coaching centers to see how it deploys under your own brand in 48 hours. If you're a university assessing at scale, our university solutions page covers batch assessment and remote English proficiency testing specifically. You can also try a proctored practice test yourself to see the experience from the student side.
